Affiliate disclosure: Some links in this article are affiliate links. If you sign up through one of them, we may earn a commission at no extra cost to you.

Who This Is For

This guide is for professionals (consultants, lawyers, healthcare workers, finance professionals, and anyone handling sensitive data) who want the power of AI without sending their information to a third-party cloud server.

What Is Ollama?

Ollama is a free, open-source tool that lets you download and run powerful AI language models directly on your own computer. Once a model is downloaded, no internet connection is required. No subscription. No data leaving your machine.

Think of it as having your own private version of ChatGPT running entirely on your laptop or desktop — completely offline if you choose.

If you’re new to AI assistants, start with our Claude AI review or ChatGPT review before diving into local models.

Why Run AI Locally?

There are three main reasons professionals choose local AI over cloud-based tools:

Privacy. Every prompt you type into ChatGPT, Claude, or Gemini is sent to a company’s servers. For lawyers, doctors, consultants, and finance professionals handling confidential information, that’s a significant concern. With Ollama, your data never leaves your machine.

Cost. After the initial setup, running AI locally is completely free. No monthly subscription, no usage limits, no per-token charges.

Control. You choose which model to run, when to update it, and how to configure it. No feature changes pushed by a vendor, no service outages, no policy changes affecting what the AI will or won’t do.

What You Need Before Starting

Before installing Ollama, check that your computer meets these basic requirements:

- Operating system: Mac (Apple Silicon or Intel), Windows 10/11, or Linux

- RAM: Minimum 8GB — 16GB or more recommended for better performance

- Storage: At least 10GB of free disk space for the smaller models; the largest model covered in this guide is a 40GB download

- Internet: Only needed for the initial download — not required to run models afterward
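If you would rather check these requirements with a script than by hand, the sketch below compares your machine against the thresholds in the list above. It assumes Python 3 is installed, which is not otherwise needed for this guide. RAM detection uses `os.sysconf`, which works on Mac and Linux but not on Windows, so the script falls back to asking you to check manually. The half-gigabyte slack is our own allowance, since machines usually report slightly less RAM than their nominal size.

```python
import os
import shutil

# Thresholds taken from the requirements list above.
MIN_RAM_GB = 8
RECOMMENDED_RAM_GB = 16
MIN_FREE_DISK_GB = 10


def total_ram_gb():
    """Return installed RAM in GB, or None if it cannot be detected."""
    try:
        pages = os.sysconf("SC_PHYS_PAGES")
        page_size = os.sysconf("SC_PAGE_SIZE")
    except (AttributeError, ValueError, OSError):
        return None  # for example on Windows, where os.sysconf is unavailable
    if pages <= 0 or page_size <= 0:
        return None
    return pages * page_size / 1024**3


def free_disk_gb(path="."):
    """Return free disk space in GB on the drive that holds `path`."""
    return shutil.disk_usage(path).free / 1024**3


def check_requirements(ram_gb, disk_gb):
    """Return a list of warnings; an empty list means the basics are met."""
    warnings = []
    if ram_gb is None:
        warnings.append("Could not detect RAM automatically; check it manually.")
    elif ram_gb + 0.5 < MIN_RAM_GB:  # allow for RAM reported slightly under nominal
        warnings.append(f"Only {ram_gb:.1f}GB RAM; {MIN_RAM_GB}GB is the minimum.")
    elif ram_gb + 0.5 < RECOMMENDED_RAM_GB:
        warnings.append(
            f"{ram_gb:.1f}GB RAM will work; {RECOMMENDED_RAM_GB}GB or more is recommended."
        )
    if disk_gb < MIN_FREE_DISK_GB:
        warnings.append(
            f"Only {disk_gb:.1f}GB free disk space; {MIN_FREE_DISK_GB}GB is the minimum."
        )
    return warnings


if __name__ == "__main__":
    results = check_requirements(total_ram_gb(), free_disk_gb())
    for line in results or ["This machine meets the basic requirements."]:
        print(line)
```

Run it from the drive where you plan to store models, or pass a different path to `free_disk_gb`.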

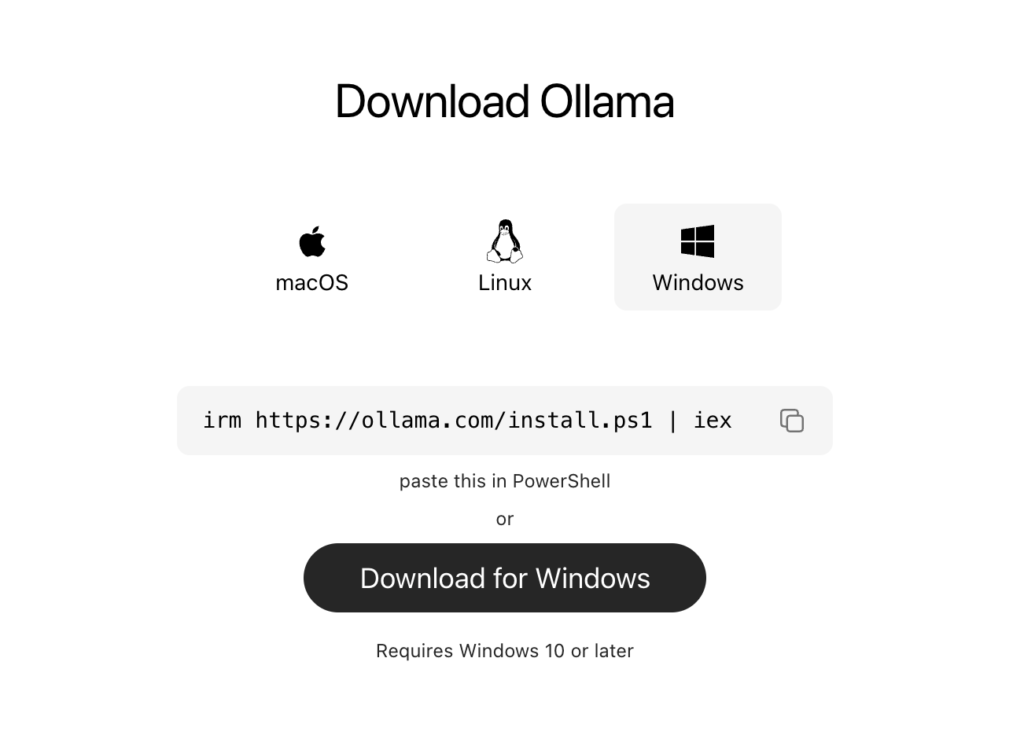

Step 1 — Download and Install Ollama

- Go to ollama.com

- Click the Download button for your operating system

- Run the installer — it takes about two minutes

- Ollama runs quietly in the background once installed — you’ll see a small icon in your menu bar or system tray

Step 2 — Download Your First AI Model

Ollama works with dozens of open-source AI models. For professionals new to local AI, we recommend starting with one of these:

| Model | Size | Best For |
|---|---|---|
| Llama 3.2 | 2GB | Fast, general use, good for most tasks |
| Mistral | 4GB | Strong reasoning and writing |
| Phi-3 | 2GB | Lightweight, excellent for older hardware |
| Llama 3.1 70B | 40GB | Most capable, requires powerful hardware |

To download a model:

- Open your Terminal (Mac/Linux) or Command Prompt (Windows)

- Type the following command and press Enter:

ollama pull llama3.2

- Wait for the download to complete. This takes a few minutes, depending on your internet speed
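If you are setting up more than one machine, the model choice and the download can be scripted. The sketch below is one possible approach, assuming Python 3 is installed. The sizes come from the table above. The tag names (`mistral`, `phi3`, `llama3.1:70b`) are our assumption about how these models are named in Ollama's model library, so confirm them on ollama.com before relying on them.

```python
import shutil
import subprocess

# Approximate download sizes in GB, copied from the table above.
# Tag names other than llama3.2 are assumptions; confirm them on ollama.com.
MODEL_SIZES_GB = {
    "llama3.2": 2,
    "mistral": 4,
    "phi3": 2,
    "llama3.1:70b": 40,
}


def models_that_fit(free_gb):
    """Return the tags whose download size fits in `free_gb` of disk space."""
    return [tag for tag, size in MODEL_SIZES_GB.items() if size <= free_gb]


def pull(tag):
    """Run `ollama pull <tag>`; return True on success, False otherwise."""
    if shutil.which("ollama") is None:
        print("The ollama command was not found; install Ollama first.")
        return False
    return subprocess.run(["ollama", "pull", tag]).returncode == 0


if __name__ == "__main__":
    free_gb = shutil.disk_usage(".").free / 1024**3
    print("Models that fit on this drive:", models_that_fit(free_gb))
    # Uncomment the next line to download the first recommendation:
    # pull("llama3.2")
```

The download line is commented out on purpose so that running the script only reports what would fit.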

Step 3 — Start Chatting

Once your model is downloaded, running it is simple:

- In Terminal or Command Prompt, type the following command and press Enter:

ollama run llama3.2

- Type your prompt and press Enter

- The AI responds directly in your terminal

Example prompt to try: Summarize the key risks in a standard NDA agreement in plain English.
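You can also send the same prompt from a script instead of typing it into the terminal. While Ollama is running, it serves a local HTTP API, by default at http://localhost:11434 (the address and the `/api/generate` endpoint are not covered elsewhere in this guide). The sketch below is a minimal example using only the Python 3 standard library; the function names are ours.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local address


def build_request(prompt, model="llama3.2"):
    """Build a non-streaming request for Ollama's /api/generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt, model="llama3.2", timeout=300):
    """Send the prompt to the local model and return its reply as text."""
    with urllib.request.urlopen(build_request(prompt, model), timeout=timeout) as reply:
        return json.loads(reply.read())["response"]


if __name__ == "__main__":
    try:
        print(ask("Summarize the key risks in a standard NDA agreement in plain English."))
    except (OSError, ValueError) as err:
        # Raised when Ollama is not running or the model has not been pulled.
        print(f"Could not get a reply from Ollama on localhost:11434: {err}")
```

Because the request goes to localhost, the prompt stays on your machine just as it does in the terminal session.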

Step 4 — Add a Better Interface (Optional)

The terminal works but isn’t the most comfortable way to chat with an AI. Several free tools add a proper chat interface on top of Ollama:

Open WebUI is the most popular option. It gives you a ChatGPT-style interface running entirely on your own machine.

To install Open WebUI:

- Install Docker Desktop on your computer

- Open Terminal and paste this command:

docker run -d -p 3000:8080 --add-host=host.docker.internal:host-gateway -v open-webui:/app/backend/data --name open-webui --restart always ghcr.io/open-webui/open-webui:main

- Open your browser and go to http://localhost:3000

- You’ll see a full chat interface connected to your local Ollama models
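If the page does not load, it helps to know which piece is not running. The sketch below checks whether anything is accepting connections on the two local ports involved: 3000, the host port from the docker command above, and 11434, which is Ollama's default API port (an assumption if you have changed Ollama's configuration). It only tests that a port is open; it does not verify which program is answering.

```python
import socket

SERVICES = {
    "Ollama API": 11434,  # Ollama's default port; differs if you reconfigured it
    "Open WebUI": 3000,   # host port from the docker command above
}


def is_listening(port, host="127.0.0.1", timeout=1.0):
    """Return True if something accepts TCP connections on host:port."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


if __name__ == "__main__":
    for name, port in SERVICES.items():
        status = "running" if is_listening(port) else "not reachable"
        print(f"{name} (port {port}): {status}")
```

If Open WebUI is not reachable, check that Docker Desktop is running. If the Ollama API is not reachable, start Ollama again from your applications menu.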


If you would like to learn more, visit the Open WebUI GitHub page.

Practical Use Cases for Professionals

Here are real-world ways professionals are using Ollama today:

Legal professionals: Drafting contract summaries, reviewing NDAs, and researching case precedents — all without sending client information to a third-party server.

Healthcare workers: Summarizing medical literature and drafting patient communications locally — keeping patient data fully private.

Finance professionals: Analyzing financial documents and generating reports without exposing sensitive client data to cloud services.

Consultants: Drafting proposals, summarizing research, and preparing presentations on confidential client projects.
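Most of the use cases above come down to summarizing a document that must stay on your machine. The sketch below shows one way to do that with Ollama's local `/api/chat` endpoint, assuming Python 3 and a model already pulled. Long documents are split on paragraph breaks so each piece stays a manageable size. The 6,000-character limit, the system prompt, and the file name `summarize.py` are illustrative choices of ours, not recommendations from Ollama.

```python
import json
import sys
import urllib.request

CHAT_URL = "http://localhost:11434/api/chat"  # Ollama's default local address


def split_into_chunks(text, max_chars=6000):
    """Group paragraphs into chunks of at most max_chars characters.

    A single paragraph longer than max_chars is kept whole as its own chunk.
    """
    chunks, current = [], ""
    for paragraph in text.split("\n\n"):
        candidate = f"{current}\n\n{paragraph}" if current else paragraph
        if current and len(candidate) > max_chars:
            chunks.append(current)
            current = paragraph
        else:
            current = candidate
    if current:
        chunks.append(current)
    return chunks


def summarize(text, model="llama3.2"):
    """Summarize each chunk with the local model and join the results."""
    summaries = []
    for chunk in split_into_chunks(text):
        payload = {
            "model": model,
            "stream": False,
            "messages": [
                {"role": "system", "content": "Summarize the text in plain English."},
                {"role": "user", "content": chunk},
            ],
        }
        request = urllib.request.Request(
            CHAT_URL,
            data=json.dumps(payload).encode("utf-8"),
            headers={"Content-Type": "application/json"},
            method="POST",
        )
        with urllib.request.urlopen(request, timeout=300) as reply:
            summaries.append(json.loads(reply.read())["message"]["content"])
    return "\n\n".join(summaries)


if __name__ == "__main__":
    if len(sys.argv) != 2:
        print("Usage: python summarize.py path/to/document.txt")
    else:
        try:
            with open(sys.argv[1], encoding="utf-8") as handle:
                print(summarize(handle.read()))
        except (OSError, ValueError, KeyError) as err:
            print(f"Could not summarize the file: {err}")
```

Treat the output as a first draft: a small local model can miss or misstate details, so review summaries of legal, medical, or financial documents against the original.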

For cloud-based AI tools that work well for consultants, see our Best AI Tools for Consultants guide.

Limitations to Know

Ollama and local AI models are powerful but have real limitations compared to cloud tools:

Performance depends on your hardware. Larger, more capable models require more RAM and processing power. On older hardware, responses can be slow.

No real-time web access. Local models don’t browse the internet — they only know what was in their training data. For current information, cloud tools like Perplexity are still needed.

Setup requires some comfort with Terminal. The installation is straightforward but involves command-line steps that may be unfamiliar to some users. The Open WebUI option removes this barrier after initial setup.
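To put a number on the hardware limitation described above, you can measure how fast a model generates text on your machine. Replies from Ollama's `/api/generate` endpoint include an `eval_count` field (tokens generated) and an `eval_duration` field (time spent generating, in nanoseconds). The sketch below divides one by the other; it assumes Python 3, the default local address, and a model that is already pulled. The benchmark prompt is an arbitrary example.

```python
import json
import urllib.request

GENERATE_URL = "http://localhost:11434/api/generate"  # Ollama's default local address


def tokens_per_second(response):
    """Compute generation speed from a parsed /api/generate response.

    eval_count is the number of tokens generated; eval_duration is the
    time spent generating them, in nanoseconds.
    """
    return response["eval_count"] / (response["eval_duration"] / 1e9)


def benchmark(model="llama3.2", prompt="Explain what an NDA is in two sentences."):
    """Run one prompt against the local model and return tokens per second."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    request = urllib.request.Request(
        GENERATE_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=300) as reply:
        return tokens_per_second(json.loads(reply.read()))


if __name__ == "__main__":
    try:
        print(f"{benchmark():.1f} tokens per second")
    except (OSError, ValueError, KeyError) as err:
        print(f"Benchmark failed: {err}")
```

Running it against two models from the table gives a like-for-like comparison on your own hardware before you commit to one.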

For professionals who need real-time web search, see our Perplexity AI review.

Our Verdict

Ollama is one of the most underrated tools available to professionals in 2026. If you handle any sensitive or confidential information in your work, the ability to run AI privately on your own machine is genuinely valuable — and the fact that it’s completely free makes it even more compelling.

The setup takes about 15 minutes. After that you have a powerful, private AI assistant that costs nothing to run.

Rating: 4.6 / 5

Download Ollama free at ollama.com

Quick Reference — Most Useful Ollama Commands

| Command | What It Does |
|---|---|
| ollama pull llama3.2 | Downloads the Llama 3.2 model |
| ollama run llama3.2 | Starts a chat session |
| ollama list | Shows all downloaded models |
| ollama rm llama3.2 | Removes a model to free up space |
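The same information that `ollama list` prints is available to scripts through the local API. The sketch below reads Ollama's `/api/tags` endpoint and reports each downloaded model with its size, which is handy when deciding what to remove. It assumes Python 3 and the default local address; the helper names are ours.

```python
import json
import urllib.request

TAGS_URL = "http://localhost:11434/api/tags"  # Ollama's default local address


def summarize_models(tags_response):
    """Turn a parsed /api/tags response into (name, size in GB) pairs."""
    return [
        (model["name"], round(model["size"] / 1e9, 1))
        for model in tags_response.get("models", [])
    ]


def list_models():
    """Ask the local Ollama server which models are downloaded."""
    with urllib.request.urlopen(TAGS_URL, timeout=10) as reply:
        return summarize_models(json.loads(reply.read()))


if __name__ == "__main__":
    try:
        for name, size_gb in list_models():
            print(f"{name}: {size_gb}GB")
    except (OSError, ValueError, KeyError) as err:
        print(f"Could not list models: {err}")
```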

Browse our full AI Tools Directory to find more tools that fit your professional workflow. Or compare cloud-based options in our Claude review, ChatGPT review, and Gemini review.